Key Points

- Orbital AI is emerging as a potential solution to the growing AI infrastructure bottlenecks on Earth.

- Nvidia is using its AI ecosystem, space-focused hardware, and aerospace partnerships to push into space-based AI computing, while AMD is leaning on its history of radiation-tolerant chips and flexible open-architecture designs.

- Although both chipmakers are positioning themselves for the orbital AI race, the technology remains in its early stages, with major challenges still limiting large-scale adoption.

The battle for AI dominance has taken off – figuratively and now literally. Yet here we are left wondering whether Earth has enough resources to support it.

At this point, our planet is constraining AI expansion in several ways. The most pressing is the shortage of available power and water.

These AI bottlenecks and shortages are real, which means the threat of delayed or postponed data-center construction is also real.

But there’s one place where these bottlenecks could be eliminated: space.

Orbital AI has arrived, which means that space data centers are on the way. And they’re going to need chips – tons of chips – to operate.

That means chip titans Nvidia (NVDA) and Advanced Micro Devices (AMD) are likely headed toward a battle for space-chip supremacy.

AI’s Bottlenecks on Earth Make Orbital AI a Necessity

My colleague Steven Longenecker recently wrote an in-depth analysis of Orbital AI (it’s a must-read).

In it, he stated:

Most folks never think about what happens behind the scenes when you ask an AI chatbot like ChatGPT or Claude a question…

With every single query, a data center somewhere fires up a rack of chips to generate your answer. That takes power – a lot of power. AI is one of the most resource-intensive industries ever built…

All that computing power must live somewhere – and get power from somewhere.

It’s clear that Earth’s abundant resources are simply not abundant enough to handle the insatiable needs of AI computing. So, one logical solution is to put AI above Earth’s atmosphere and into orbit.

Orbital AI: How Space-Based AI Computing Is Changing Semiconductors

Traditional satellites primarily relay data back to Earth. Orbital-AI satellites, however, process data onboard in real time, reducing latency and limiting massive downlinks.

Much of what we do in 2026 relies on satellites. They enable worldwide communication through the internet, phone, and television. They are used to predict the weather and watch for environmental changes and natural disasters. Satellites also enable GPS technology that provides the precise location data we need for navigation.

Orbital AI changes the way satellites are used. Rather than simply amplifying and relaying information, orbital-AI satellites will actually process data and perform real-time inference in space. In other words, these satellites will compute rather than just relay.

Processing data on the satellite itself reduces latency by eliminating what would otherwise require constant, and extremely large, downlinks to Earth. This is a huge time-saver.
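To put rough numbers on that time savings, here is a minimal sketch comparing how long it takes to downlink raw sensor data versus only the results of onboard inference. The payload sizes and link speed are hypothetical figures chosen for illustration, not values from any real satellite, and the calculation ignores protocol overhead and ground-station pass windows.

```python
def transfer_seconds(payload_bytes: float, link_bits_per_second: float) -> float:
    """Time to push a payload through a link (no overhead, no pass windows)."""
    return payload_bytes * 8 / link_bits_per_second


# Hypothetical figures for illustration only.
RAW_SENSOR_BYTES = 500e9   # 500 GB of raw imagery collected on board
RESULT_BYTES = 50e6        # 50 MB of inference results (detections, summaries)
DOWNLINK_BPS = 1e9         # 1 Gbit/s downlink

raw_time = transfer_seconds(RAW_SENSOR_BYTES, DOWNLINK_BPS)
result_time = transfer_seconds(RESULT_BYTES, DOWNLINK_BPS)

print(f"Raw data downlink:     {raw_time:,.0f} s")
print(f"Results-only downlink: {result_time:.1f} s")
```

Under these assumed numbers, sending only the results takes a fraction of a second, while sending the raw data takes more than an hour of continuous link time.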

And it has a major impact on how semiconductors are designed. Rather than being built solely with speed and performance in mind, chips for orbital AI must also consider power efficiency, thermal management, and overall tolerance to space conditions, such as radiation exposure.

Are Nvidia and AMD ready for the challenge of building space-friendly AI chips?

Nvidia’s Launch Into the AI Space Race

Orbital-AI computing – which is essentially edge computing in space – has clearly been on Nvidia’s radar for quite some time. Especially when you consider the technology it has developed for these specific AI chips.

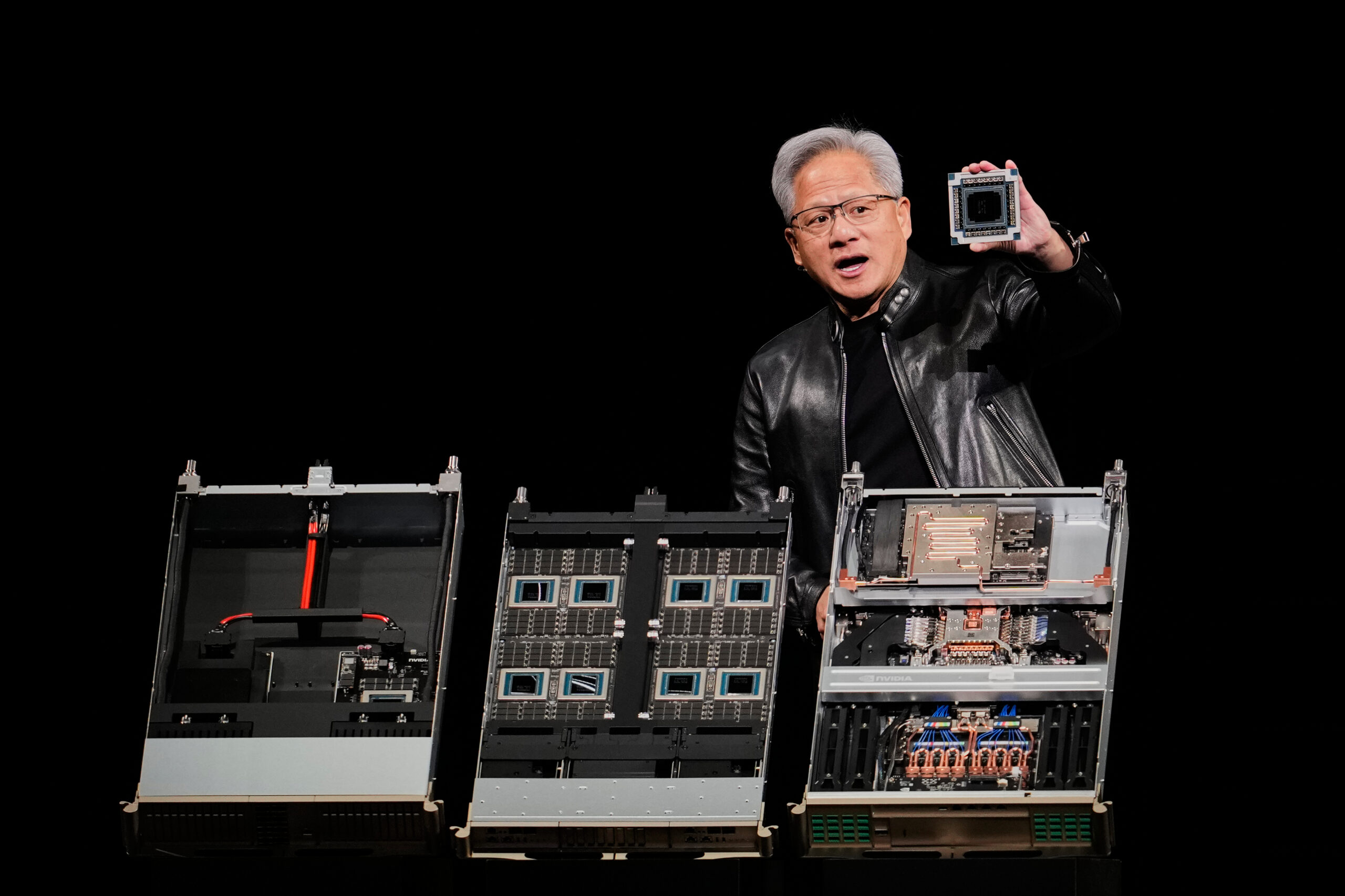

Nvidia recently announced that it sent AI chips into orbit. The company’s news release from March 16 stated:

By bringing data-center-class performance to size-, weight-, and power (SWaP)-constrained environments, Nvidia is enabling AI applications to operate seamlessly from ground to space, and space to space, while supporting increasingly complex mission profiles.

Nvidia’s Space-AI Technology

Edge computing and processing are at the core of Nvidia’s (and rival AMD’s) space-AI strategy. And the company has developed the components to make that a reality.

The Space-1 Vera Rubin module combines Nvidia’s powerful Vera central processing unit (“CPU”) and Rubin graphics processing unit (“GPU”) to “double the efficiency” and deliver “50% faster performance than traditional data center CPUs.” This enables large-language models (“LLMs”) to operate directly in space by processing massive data streams from space in real time.

Because the platform uses Nvidia’s Compute Unified Device Architecture (“CUDA”) ecosystem, AI models developed on Earth can be deployed in orbit with minimal modification.

This is important from a technological standpoint. But it’s also a brilliant strategic move by Nvidia because using the Space-1 Vera Rubin module expands Nvidia’s ecosystem and creates a level of developer lock-in.

Working in tandem with the Space-1 Vera Rubin are:

- Nvidia IGX Thor, which offers the industrial-grade durability needed to withstand space’s harsh conditions while also supporting real-time AI processing.

- Jetson Orin, which provides high-performance AI inference and enables real-time processing of vision, navigation, and sensor data directly on-board spacecraft, reducing latency and optimizing bandwidth.

Partnerships

To make Orbital AI a reality, Nvidia must work with partners to handle satellite components, orbital infrastructure, and space launches. So far, the company has covered its bases accordingly.

- Aetherflux: This space data-center startup is constructing a constellation of satellites that will house Nvidia’s Space-1 Vera Rubin modules and serve as an orbital-AI data center. The project is scheduled to launch in the first quarter of 2027.

- Starcloud (formerly Lumen Orbit): The satellite company is building and deploying small, solar-powered satellites that use Nvidia GPUs to process data in orbit.

Nvidia has also partnered with several other aerospace and satellite companies to expand orbital-AI infrastructure.

Nvidia’s partnership with Starcloud is especially notable. Last November, the company launched the first satellite equipped with an Nvidia H100 GPU while testing the durability and efficiency of Nvidia’s component in space conditions.

Starcloud showed that it is possible to train AI models in low-Earth orbit (“LEO”) using Nvidia’s technology. But Nvidia must first overcome some major hurdles to make orbital AI a near-term reality.

Environmental, Structural, and Financial Constraints

While there are advantages to establishing AI data centers in space, the environment is not one of them.

For orbital AI to become a long-term reality, Nvidia – and other companies – will need to solve a host of structural and environmental constraints to accommodate LEO’s harsh conditions.

First, there’s thermal management. AI GPUs run extremely hot, making some type of cooling system a must to ensure the chips don’t melt. In space, with no air to carry heat away, that cooling comes from rejecting waste heat through thermal radiation (which consumes no water whatsoever).

But it does require huge, heavy, and expensive radiator panels. And heavy equipment, such as those panels, increases launch costs.

An alternative solution – or a complementary one – may involve capping the power these high-performance GPUs draw (up to 700 watts each) to avoid overheating.

Which leads to the next challenge… power scarcity.

Yes, solar energy is effectively limitless in space. However, converting it into the immense – and constant – amount of power that AI needs to operate requires very large solar-panel arrays and bulky batteries, which would also add to launch costs.
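As a back-of-the-envelope sketch of why those arrays get large, the function below estimates the solar-panel area needed to keep a single 700-watt GPU running continuously. It uses the solar constant of roughly 1,361 watts per square meter above the atmosphere. The 30% cell efficiency and 65% sunlit fraction of each orbit are assumptions for illustration, and the estimate ignores battery losses, pointing losses, and panel degradation, all of which would push the real figure higher.

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # approximate sunlight intensity above the atmosphere


def solar_array_area_m2(load_w: float,
                        cell_efficiency: float = 0.30,   # assumed
                        sunlit_fraction: float = 0.65) -> float:  # assumed
    """Panel area needed to supply a continuous load, averaged over an orbit."""
    if not 0 < cell_efficiency <= 1 or not 0 < sunlit_fraction <= 1:
        raise ValueError("efficiency and sunlit fraction must be in (0, 1]")
    # While in sunlight, the array must also bank energy for the eclipse period.
    generation_needed_w = load_w / sunlit_fraction
    return generation_needed_w / (SOLAR_CONSTANT_W_M2 * cell_efficiency)


area = solar_array_area_m2(700.0)
print(f"Array area for one 700 W GPU: {area:.2f} m^2")
```

Under these assumptions, one GPU needs a bit more than 2.5 square meters of panel. Multiply that by thousands of chips and the launch-mass problem becomes clear.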

Finally, any equipment sent into LEO must withstand intense and dramatic temperature changes, the damaging effects of space radiation, and the dangers posed by space debris and traffic.

As orbital AI expands, Nvidia will certainly be a major player to watch.

AMD May Have Early ‘Edge’ in Orbital AI

It’s too early to proclaim a front-runner in the orbital-AI sphere, but AMD has put itself in an excellent position to pull ahead. Here’s why.

Space Experience

AMD brings extensive experience creating products designed for space.

The company already offers an impressive portfolio of space-grade products, including:

- XQR Versal system-on-chip (“SoC”) devices, designed specifically to equip satellites and spacecraft with AI inference. These SoCs process data on board for faster inference.

- RT Kintex UltraScale and Virtex field-programmable gate arrays (“FPGAs”), built to withstand space radiation and allow for image and signal processing in space.

AMD’s space-grade components go through rigorous testing to ensure they can withstand the harsh conditions of space – especially radiation – for up to 15 years.

Open Architecture and Edge-First Computing

Rather than adapting orbital-AI chips originally designed for terrestrial use, AMD develops chips specifically for edge environments like space.

For example, AMD’s combination of CPUs, GPUs, and SoCs on one chip – referred to as “heterogeneous computing” – optimizes specific tasks instead of relying primarily on power-hungry GPU inferencing. This energy-efficient design enables the chips to operate autonomously, making them ideal for long-term space missions in edge environments.

And unlike Nvidia’s closed CUDA environment, AMD’s Versal AI and RF series orbital chips offer open-architecture computing that focuses on adaptability and power efficiency for extreme edge environments.

This type of open platform, which relies on reprogrammable SoCs and FPGAs, allows operators access to update or change AI models and functionality at any time after launch.

That accessibility and reprogrammability set AMD apart from its competitors, whose AI chips often operate within a closed loop.

AMD still has a long way to go to catch up to Nvidia in total chip market share. But the company has a history of developing innovative space-ready technology that may give it an early edge over Nvidia in orbital AI.

Are SpaceX’s AI Chips a Viable Threat to AMD and Nvidia?

Elon Musk, with his massive Terafab project, is aiming to build custom AI GPUs in-house for Tesla’s Full Self-Driving (“FSD”) vehicles, Optimus humanoid robots, SpaceX satellites, and orbital-AI data centers.

Terafab would keep all chip production under one roof (or, at least, localized). And that would ideally eliminate the bottlenecks that come with reliance on third-party suppliers.

More importantly, with SpaceX customizing its own GPUs and chips, it can create the exact components its Starlink satellite constellation needs for optimal performance – all while doing it at what should be a drastically lower cost.

But before we get too carried away by Musk’s overexcitement, let’s remember that construction on the huge Terafab facility in Texas is barely underway. Chips probably won’t be produced there for at least another year, and more likely in 2028.

Should Nvidia and AMD be worried? Not yet. Both companies have a multiyear head start in orbital AI over Musk and SpaceX. And with Terafab not even close to completion, any suggested timeline right now is mere speculation. But Musk is aiming for them.

Speed vs. Durability: The Massive Challenges of Computing in Space

Recognizing the challenges and limitations of computing in space is the first step toward developing solutions. And computing in space is a much different ball game than it is down here on Earth.

For example, in space, perhaps the most critical principle is this: Performance per watt is more important than raw performance.

The reason is those tight SWaP constraints. Every additional watt requires more solar and radiator panels, which significantly increases costs.

The result is a difficult balance between size and power. Satellites generate only a fraction of the megawatts consumed by terrestrial-AI data centers. So, performance per watt becomes a critical measure in space. And that requires the right combination of size and power.
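A small sketch shows why performance per watt can matter more than raw performance when the power budget is fixed. Both chips and the 1,500-watt budget below are hypothetical and do not describe any Nvidia or AMD product. The only point is the arithmetic: under a hard power cap, total throughput depends on how many chips fit in the budget.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    name: str
    tops: float   # peak throughput, in trillions of operations per second
    watts: float  # power draw at that throughput

    @property
    def tops_per_watt(self) -> float:
        return self.tops / self.watts


def fleet_throughput(chip: Chip, power_budget_w: float) -> float:
    """Total TOPS from as many whole chips as fit in the power budget."""
    count = int(power_budget_w // chip.watts)
    return count * chip.tops


# Hypothetical chips for illustration only.
fast_chip = Chip("fast-but-hungry", tops=1000.0, watts=700.0)
lean_chip = Chip("slow-but-efficient", tops=200.0, watts=75.0)
BUDGET_W = 1500.0  # assumed satellite power budget

for chip in (fast_chip, lean_chip):
    total = fleet_throughput(chip, BUDGET_W)
    print(f"{chip.name}: {chip.tops_per_watt:.2f} TOPS/W, {total:.0f} TOPS in budget")
```

In this made-up case, the slower chip delivers twice the total throughput because 20 of them fit in the budget versus two of the faster chip.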

Then there’s the thermal-management issue. It’s already a problem on Earth, but for different reasons than it is in space. On land, AI data centers require massive amounts of water to cool their equipment and prevent overheating.

There’s no water in space, so none is needed to cool the hot-running chips on satellites. But there’s also no air in the vacuum of space to take away the heat, so convection cooling is impossible. Still, those chips must be cooled. And in space, that’s achieved through thermal radiation.

The problem is that radiation is a slower, less efficient form of heat transfer than convection. That’s why large radiator panels are a necessity for satellites carrying AI chips.

For orbital-AI purposes, the radiator panels on every satellite must reject heat as fast as the chips generate it. And that requires a massive surface area.
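The surface-area requirement follows from the Stefan-Boltzmann law, which says a surface radiates power in proportion to its area and to the fourth power of its temperature. The sketch below estimates the panel area needed to reject 700 watts of heat. The emissivity of 0.9, the 300-kelvin panel temperature, and the single radiating side are assumptions for illustration. The calculation also ignores heat absorbed from the sun and Earth, so a real panel would need to be larger.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w: float,
                     panel_temp_k: float,
                     emissivity: float = 0.9,    # assumed surface coating
                     sink_temp_k: float = 0.0,   # assumed: ignores sun and Earth heating
                     sides: int = 1) -> float:
    """Panel area needed to radiate a given heat load at a given temperature."""
    flux_w_m2 = emissivity * STEFAN_BOLTZMANN * (panel_temp_k**4 - sink_temp_k**4)
    if flux_w_m2 <= 0:
        raise ValueError("panel must be warmer than its surroundings")
    return heat_w / (flux_w_m2 * sides)


area_300k = radiator_area_m2(700.0, panel_temp_k=300.0)
area_350k = radiator_area_m2(700.0, panel_temp_k=350.0)
print(f"Radiator area at 300 K: {area_300k:.2f} m^2")
print(f"Radiator area at 350 K: {area_350k:.2f} m^2")
```

Under these assumptions, a single 700-watt GPU needs roughly 1.7 square meters of radiator at 300 kelvin. Running the panel hotter shrinks the area, but the chips must then tolerate higher operating temperatures.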

All of this raises the question: Is either Nvidia or AMD able to overcome these hurdles to successfully launch data centers in space?

Not quite yet, but they’re getting closer.

Nvidia, as noted earlier, has created its Space-1 Vera Rubin module specifically for data centers, with up to 25 times more AI compute for inferencing in space than the H100 GPU that launched in November.

And its Jetson Orin and IGX Thor platforms were designed with efficiency in mind to handle edge computing in orbit.

AMD, on the other hand, is relying on its aerospace history to develop orbital-AI chips. Its Versal SoCs and radiation-tolerant FPGAs have already succeeded in space aboard the Mars Perseverance rover and on NASA’s NISAR mission.

Plus, AMD builds its systems specifically for edge processing in space so they can effortlessly process huge amounts of data aboard a satellite or spacecraft. The company’s modular GPUs, CPUs, and AI accelerators also enable components to be replaced or upgraded without creating brand-new systems.

As it stands today, Nvidia has the components necessary for smaller edge-AI workloads in LEO. And AMD offers the technology to handle longer missions not only in LEO but also in deep space aboard spacecraft.

Which Stock Is the Better ‘Space-Chip Play’ for Your Portfolio?

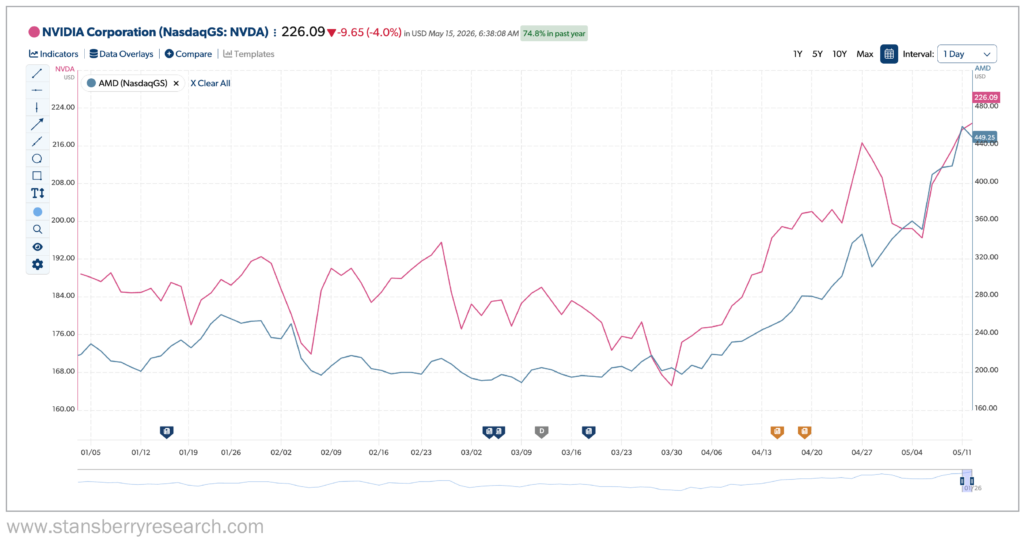

Right now, it’s hard to go wrong with either Nvidia or AMD. Since the start of the year, AMD has grown significantly – its share price has more than doubled. Nvidia’s 2026 increase has been more incremental, up nearly 17%, as of Wednesday’s close.

As far as space is concerned, however, there’s no clear winner… yet.

Each chipmaker is approaching the space race in a different way – Nvidia with its closed ecosystem and high-performance AI chips and platforms, and AMD with its history in space and durable, efficient components.

But until orbital-AI data centers are established and operational, there’s no real way of knowing how this competition for extraterrestrial-AI dominance will unfold.

What we do know now is that space-based AI is not currently driving much revenue for either Nvidia or AMD.

At some point, however, it likely will. In the meantime, investors should watch for future announcements of partnerships between chipmakers and companies specializing in aerospace technology and deployment, as well as any further launches of AI infrastructure into orbit.

These will be sure signs of progress and will signal how close orbital AI is to becoming a reality. In the short term, any investments in AMD or Nvidia should be driven largely by the ongoing, intense demand from terrestrial AI, not by what may eventually happen in space.

Regards,

David Engle

